Insights for Federal Innovators

Velocity

Convergence Is Everywhere

Missions of national priority depend on technical fusion for speed, value, and outcomes, which calls for creatively blending capabilities and domains that were once separate. How will technical convergence change the game for border security, critical infrastructure, space dominance, and more? And how can federal enterprises use contemporary environments to unleash convergence at scale? Read on to engage with a range of perspectives and insights that explore the power behind convergence.

Featured Content

Dive into the latest insights, emerging trends, and fresh perspectives from our current issue.

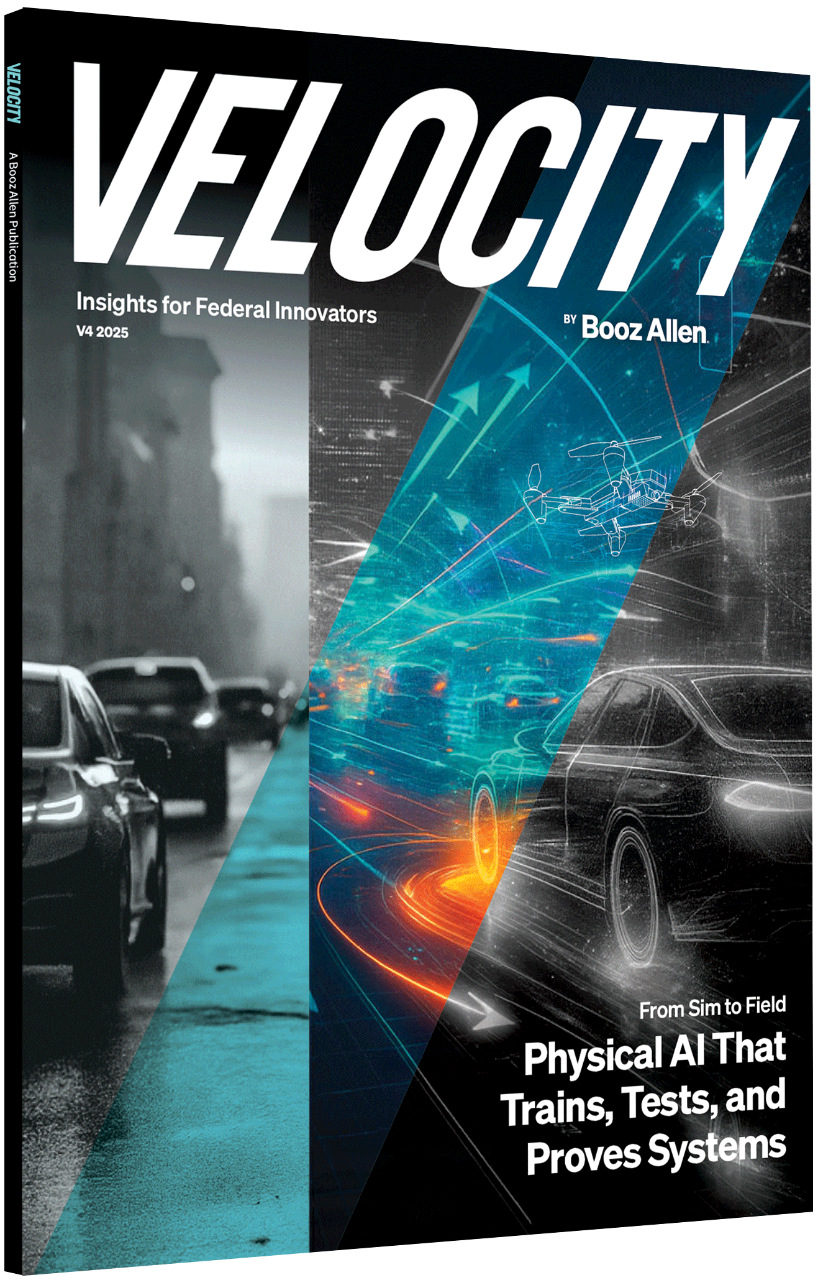

Cover Story

Sim to Field: Physical AI Trains and Proves Systems

Learn how physical AI enhances the reliability and safety of AI systems by training and testing them in high-fidelity simulations and digital twins that reflect real-world conditions and support continuous improvement.

Autonomy at the Tactical Edge

Shield AI's Brandon Tseng discusses AI-powered warfare and how autonomous systems are transforming military operations.

Traveling Light: Silicon Photonics

Booz Allen researchers examine how silicon photonics revolutionizes data transfer, enhances AI, and shapes U.S.-China tech competition.

Mission Spotlight

Agentic Software Development Decoded

Learn how federal IT teams can use agentic AI to accelerate software delivery, modernize legacy systems, and keep pace with mission demands.

AI-RAN and the Race to 6G

Discover how AI-RAN technology optimizes network performance and serves as the foundation for the future 6G stack.

Building a Better Innovation Factory

Booz Allen's Haluk Saker shares how a trusted cloud environment addresses real-world DevSecOps needs for federal projects.

Final Thoughts

Accelerating Mission Outcomes Through Tech Investment

by Matt Calderone, Former Chief Financial Officer

Learn how Booz Allen leverages tech investment and AI partnerships to ensure American leadership in critical sectors and innovations.

Best of Velocity

Explore our most popular articles from past editions of Velocity.

Velocity Archive

Download full editions of previous Velocity issues to discover more insights.