Artificial intelligence (AI) agents are expected to work autonomously or semi-autonomously: making decisions, executing complex tasks quickly, and often delivering insights and efficiency beyond what people can achieve alone. They promise to revolutionize how work gets done, but only if they don't get lost or confused along the way.

The challenge? The underlying large language models (LLMs) often work in an information vacuum, cut off from the resources and context needed to create real value. AI agents are designed to fill this void, provided they are equipped to behave predictably, efficiently, and repeatably.

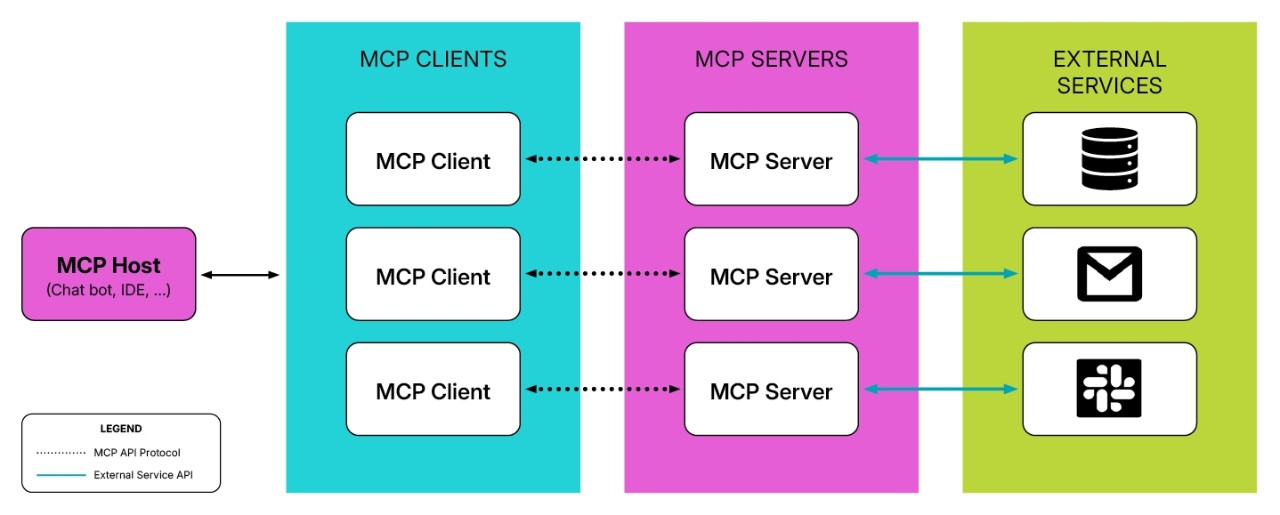

Specifically, AI agents need seamless interoperability with enterprise systems, data repositories, and other agents. Anthropic’s Model Context Protocol (MCP), Google’s Agent2Agent (A2A), and other emerging protocols address this by standardizing how agents gather, interpret, and act on external information, helping to move deployments beyond one-off prototypes.

As we will explore, the impact of these new standards could mirror how standardized application programming interfaces (APIs) transformed the internet, allowing e-commerce and extended supply chains to take off. For agentic AI, protocols like MCP and A2A could play a similar role, paving the way for new business models, such as digital workers that use advanced reasoning to navigate and execute complex business processes.